As I pointed out in my previous article about Google’s discrimination against non-binary people, tech doesn’t exist in a vacuum. What we create has an impact on people’s lives and can even affect entire communities.

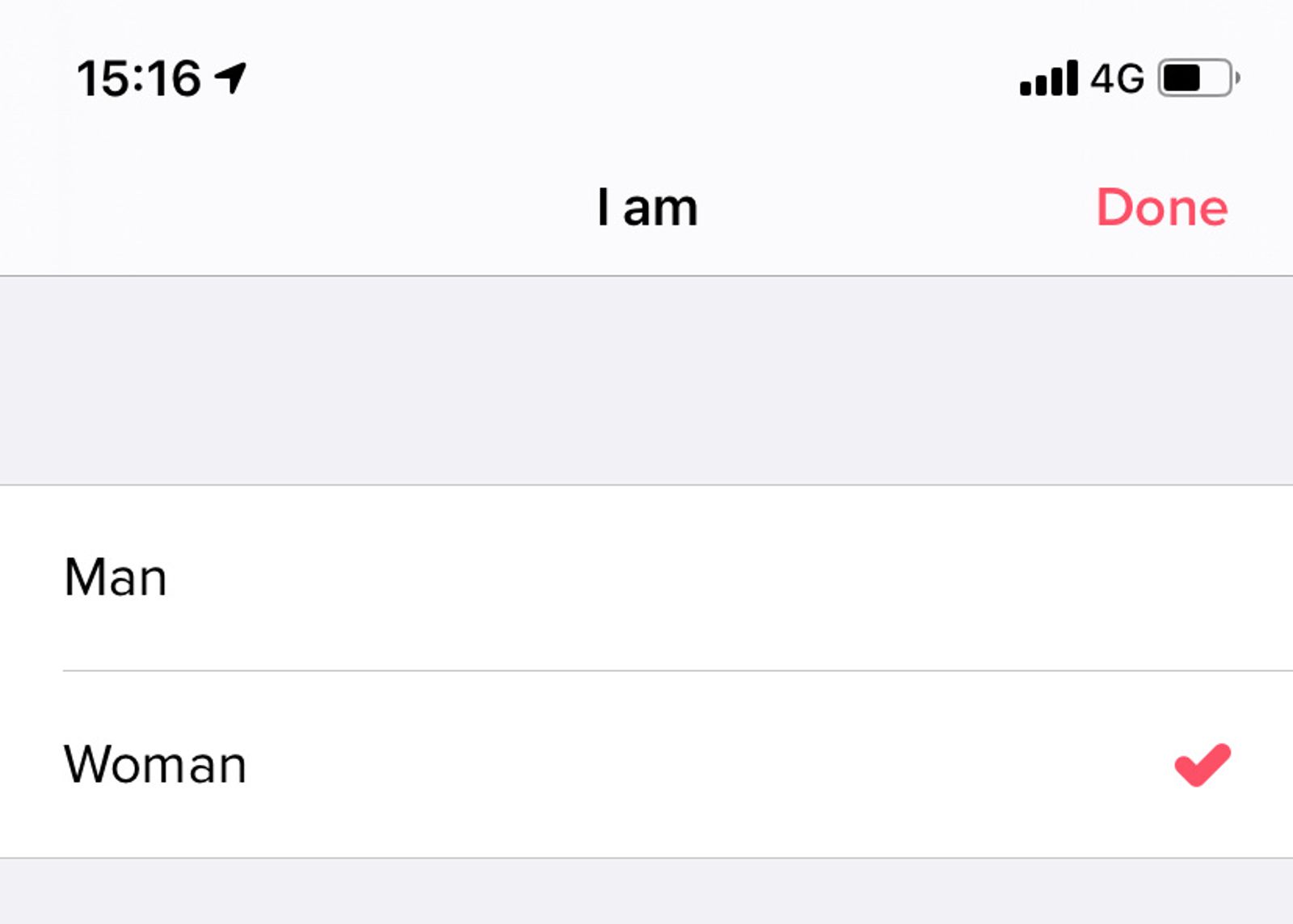

There’s a lot that can go wrong, and as a non-binary person I experience a lot of it on a daily basis (and wrote an entire article about it a while back). Having my pronouns in my profile on Twitter occasionally leads to harassment, most forms erase my identity completely, products don’t accommodate name changes, and hate speech and transphobia are everywhere.

While these are examples of how technology directly or indirectly causes harm to the trans community, even the seemingly “minor annoyances” become heavy for a community that already faces a lot of discrimination and hate related to their gender.

It’s not “just about not getting to pick our gender when signing up to Tinder” when your existence is constantly denied, erased or forgotten about everywhere in society.

According to Stonewall, more than 83% of young trans people have experienced verbal abuse or harassment, over 60% have experienced threats, 65% of trans people have been discriminated against and over half can’t receive health care from their GP.

What this means concretely for your work depends entirely on what you’re working on and who you’re building for. A platform like Facebook has different needs than, let’s say, accounting software or personal blogs.

There’s a lot all of us can be doing, regardless of what we’re building, to ensure we’re building trans inclusive products and services.

It all starts with education.

We can’t keep developing products without trying to understand how they impact different people, communities or the planet as a whole.

That’s why I believe we need more people with a background in humanities and social sciences in tech; the anthropologists, historians, sociologists, philosophers, and so on.

But more importantly, we all have the responsibility to educate ourselves, continuously. After all, we can’t design trans inclusive products if we don’t know, or care, about trans people, the impact technology has on them or the struggles they face.

There’s a lot to cover around what it means to be trans or non-binary, what our experiences are and how technology could better accommodate us. It almost requires its own post (and I explained some things in my article about navigating the internet as a non-binary person), but here’s some material to get you started:

- Transgender definition (non-binary wiki).

- Non-binary definition (non-binary wiki).

- What’s it like to be trans and live with gender dysphoria (article).

- Statistics by Stonewall (pdf).

- Beyond The Gender Binary (book).

Empowering trans voices.

We also have to keep in mind that education doesn’t overrule lived experiences. With transphobes denying our existence and popular news outlets already writing sensationalist fake news about us, we don’t need “well-intended” allies to decide how we feel.

No, trans inclusive design shouldn’t just be the responsibility of trans people. And yes, we need allies, and everyone should put in the work. But that means listening to our needs and experiences, and empowering and uplifting our voices.

Even within our own community, it’s important to include and listen to people with different backgrounds. The experiences of a white trans man will be vastly different from those of a Black trans woman, even though they’re both trans.

For example, I’m gay and non-binary, have C-PTSD as a result of abuse during my childhood and am an immigrant in this country. But at the same time, I’m white, have a stable income, a healthy relationship and access to affordable health care (although the Norwegian system for getting hormone treatment and surgery does little to include non-binary people).

While I can say a lot about what it’s like to be non-binary, I also need to keep in mind I’m talking from the perspective of someone with my background, and other non-binary people’s experiences might be vastly different.

I recommend watching Tatiana Mac’s talk “How Privilege Defines Performance”, which explains this really well.

Uncovering potential harm

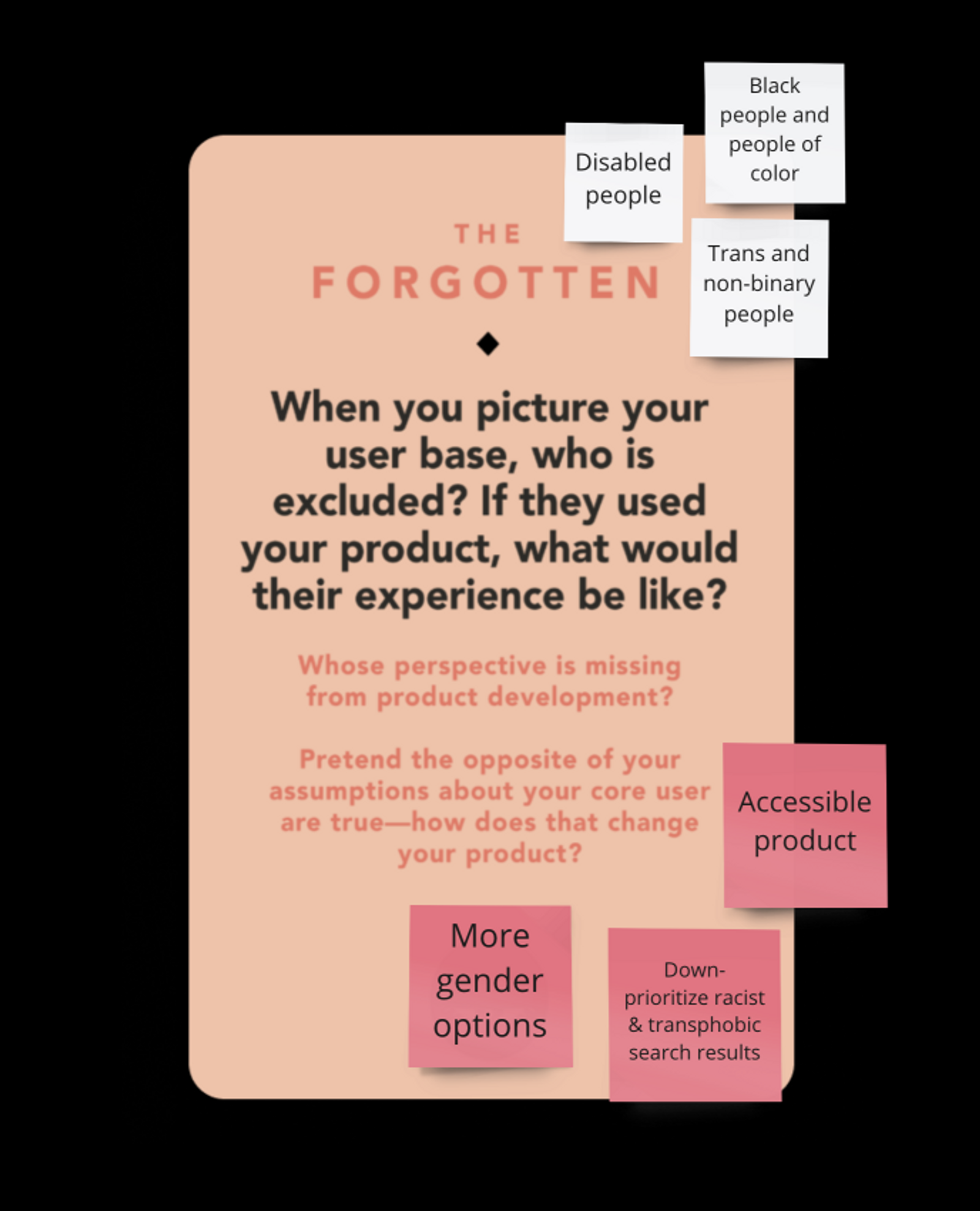

There are some really great tools out there to help us uncover what kind of harmful or unethical impact our product can have. Personally, I really like Tarot Cards of Tech and the Design Ethically toolkit, but I listed a lot more similar resources on EthicalDesign.guide.

Tarot Cards of Tech

The Tarot Cards of Tech are questions designed by Artefact to make us think about the impact and consequences of our technology.

The deck explores three different categories, which each have four cards with questions:

- Scale and disruption: what can go wrong if your product becomes popular, what would disappear and how would it impact the environment.

- Usage: how can people misuse your product and how do the different use cases affect people.

- Equity and access: who are we forgetting about, how can we empower underserved groups and create a safe environment for them.

The questions are quite general and I’d like to see a similar deck that covers some subjects a bit more in-depth, but they are framed in a very friendly way using accessible language (the site itself is not very accessible though, unfortunately, so I recommend checking out their PDF in addition). Sometimes this work might seem intimidating, and I really like that Tarot Cards of Tech makes it very approachable.

Questions like “When you picture your user base, who is excluded? If they used your product, what would their experience be like?” and “What could make people feel unsafe or exposed?” can make us think about how to give people from minoritized groups a safe and fair experience.

And looking at what the experience would be like if the product were used in the exact opposite way from how it was intended helps us detect those unintended consequences and prioritize impact over intent.

In the case of serving targeted ads based on gender, the opposite usage could be “excluding people from ads based on gender”, which is exactly what happened in Google’s case.

Design Ethically Toolkit

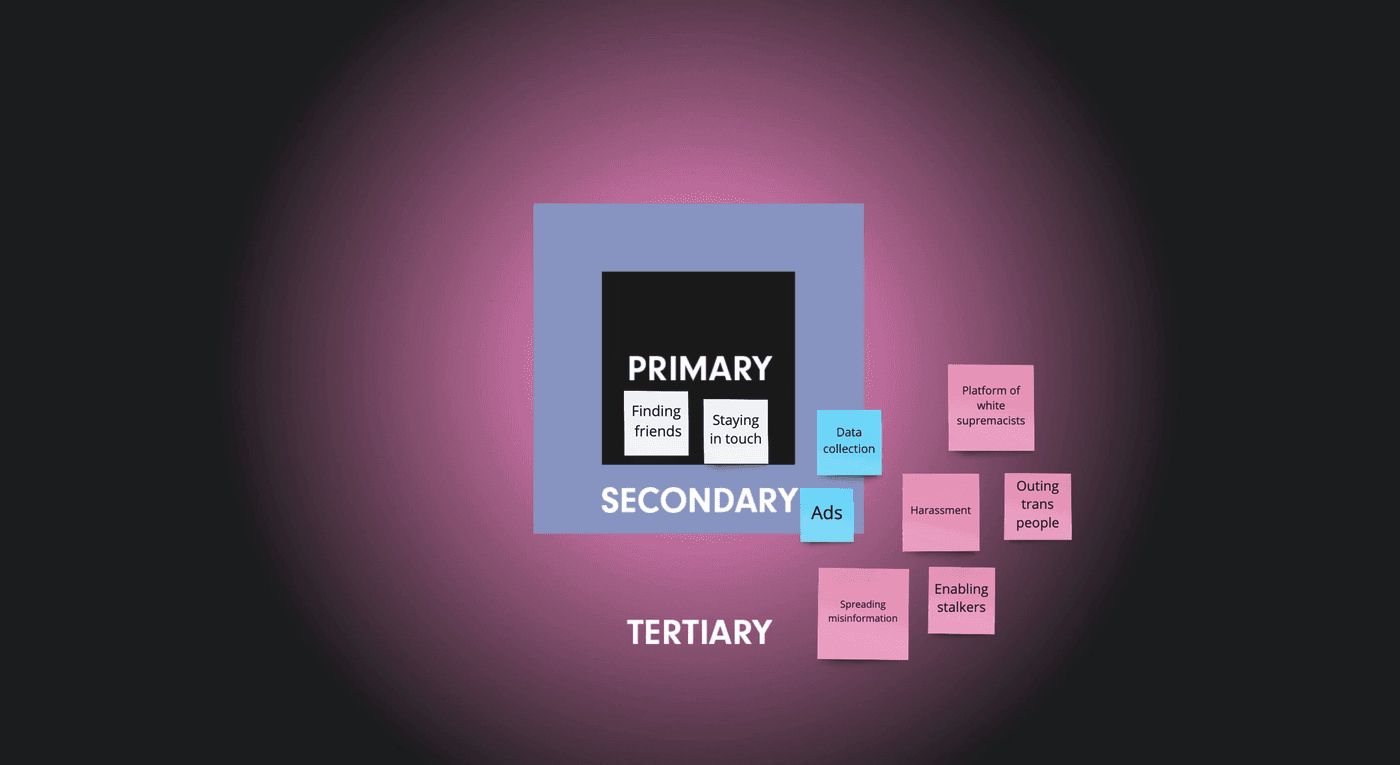

The Design Ethically Toolkit (by Kat Zhou) is a set of individual and group exercises, focused on evaluation, forecasting and monitoring.

One exercise I’ve found very useful is Layers of Effect, where we map out the primary, secondary and tertiary layers of effect.

The primary layer is the main use-case for the product, items in the secondary layer are aspects that don’t define the product but are known and relevant for the company, and the tertiary layer contains all the good or bad unintended consequences.

As I wrote in my previous post about Google, when products cause harm we like to think of that damage as “accidental”, “unintended” or “unforeseeable”, but exercises like these make those issues a lot more foreseeable and preventable.

Those with the privilege of creating products have the responsibility of defining ethical primary and secondary effects, as well as forecasting tertiary effects to ensure that they pose no significant harm.

- Kat Zhou / Design Ethically

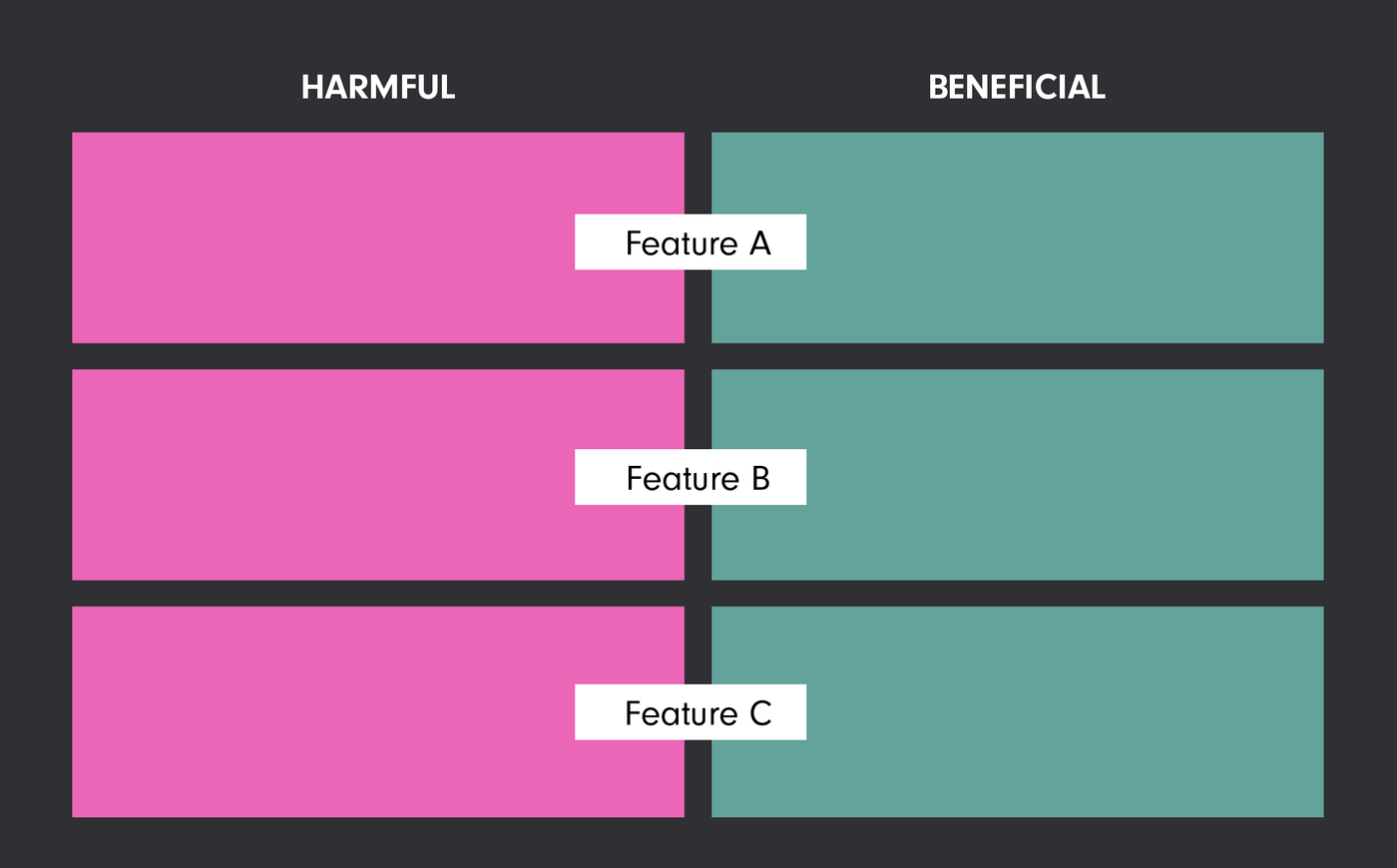

Another exercise from the toolkit I’ve frequently used is Dichotomy Mapping, which is meant to highlight how the positive aspects of each feature can become harmful when pushed to the extreme.

The example they give is how Facebook’s engagement-ranked news feed was intended to show the most relevant content from friends and family, while in reality it ends up promoting the most controversial or clickbait-y content.

I’ve implemented a slight variation of this before when doing research for new features, where I expanded it to the following:

- Good examples of similar features

- Bad examples of similar features

- What are the potential positive effects of our feature?

- What are the potential negative effects, what can go wrong?

- What could we do to prevent the negatives?

Managing and monitoring risk.

I was first introduced to risk management a few years ago, when the company I was working for had to start documenting risk assessment and mitigation. I’m not sure how common it is to include ethics in the risk management process (I’ve mainly seen it used with regard to “technical” safety), but it definitely should be part of it.

We used Jira plugins, but there are plenty of other templates and tools out there that provide similar functionality.

In most of them, we’re expected to fill in something along the lines of:

- What is the risk?

- What causes it?

- What’s the chance of it happening?

- How big is its impact?

- How will we mitigate it?

The higher the chance and the larger the impact, the higher the severity of the issue. This can give us a prioritized list of the risks, and can be used to keep ourselves accountable.
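To make that prioritization concrete, here is a minimal sketch of a risk register in Python. It is not how any particular Jira plugin works. The `Risk` class, the 1 to 5 scales and the "likelihood times impact" scoring rule are all assumptions made for illustration, and the example entries and their scores are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    """One row of a hypothetical risk register."""

    description: str  # What is the risk?
    cause: str        # What causes it?
    likelihood: int   # Chance of it happening: 1 (rare) to 5 (almost certain)
    impact: int       # Size of the impact: 1 (minor) to 5 (severe)
    mitigation: str   # How will we mitigate it?

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 1-5 scale.
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def severity(self) -> int:
        # Assumed scoring rule: higher chance and larger impact
        # both raise the severity, so we multiply them.
        return self.likelihood * self.impact


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Return the risks ordered from highest to lowest severity."""
    return sorted(risks, key=lambda risk: risk.severity, reverse=True)


# Illustrative entries only; the scores are made up.
register = [
    Risk(
        description="Sign-up form only offers binary gender options",
        cause="Gender is modelled as a required two-value field",
        likelihood=5,
        impact=3,
        mitigation="Make the field optional and allow self-description",
    ),
    Risk(
        description="Gender-targeted ads exclude non-binary people",
        cause="Targeting only recognises 'male' and 'female'",
        likelihood=4,
        impact=5,
        mitigation="Review targeting categories before launch",
    ),
    Risk(
        description="Old name keeps showing after a name change",
        cause="Name is copied into several places and never updated",
        likelihood=2,
        impact=4,
        mitigation="Store the name once and let people change it",
    ),
]

for risk in prioritize(register):
    print(f"{risk.severity:>2}  {risk.description}")
```

Whatever scale you pick matters less than agreeing on it as a team and revisiting the scores regularly, so the list stays something you can hold yourselves accountable to.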

Monitoring Checklist

The Design Ethically Toolkit has a great monitoring checklist as well. It has a list of questions people working on a product can use on an ongoing basis after launch.

Resources

- Transgender definition (non-binary wiki).

- Non-binary definition (non-binary wiki).

- Transgender definition (self-defined).

- Non-binary definition (self-defined).

- What’s it like to be trans and live with gender dysphoria (article).

- Statistics by Stonewall (pdf).

- Beyond The Gender Binary (book).

- pronoun.is (wiki).

- Why is it "pronouns" and not "preferred pronouns" (Instagram post).

- Why is it "they are non-binary" and not "they identify as non-binary" (Instagram post).

- Your Words Matter: The Importance of Pronouns in the World and the Workplace (article).

- Navigating the internet as a non-binary designer (article).

- Excluding non-binary people by design: How sign-up forms can lead to discrimination (article).

- Thoughts on Genderify, gender discrimination, transphobia, and (un)ethical AI (article).

- Marginalized by design (article).

Design tools

- Tarot Cards of Tech, a toolkit by Artefact.

- Design Ethically, a toolkit by Kat Zhou.

- Risk Management for Jira, a Jira plugin.

- Agile Risk Management, another Jira plugin.

- Self-defined, a dictionary by Tatiana Mac.

- The Gender Spectrum Collection, a stock photo library by Vice.

- Ethics for designers, a toolkit by Jet Gispen.

- Disabled and Here queer section, a stock photo library by Affect.

Hi! 👋🏻 I'm Sarah, a self-employed accessibility specialist/advocate, front-end developer, and inclusive designer, located in Norway.

I help companies build accessible and inclusive products, through accessibility reviews/audits, training and advisory sessions, and also provide front-end consulting.

You might have come across my photorealistic CSS drawings, my work around dataviz accessibility, or my bird photography. To stay up-to-date with my latest writing, you can follow me on Mastodon or subscribe to my RSS feed.

